We are excited to introduce the SELMA Open-Source Software (OSS) Platform, developed by IMCS at the University of Latvia. SELMA OSS offers effective means to test and compare the performance of various language models used in multilingual media monitoring and content production. The open-source tool (also referred to as Use Case 0, or The Basic Testing and Configuration Interface) provides:

- automatic speech recognition (ASR) from audio/video files,

- punctuation and capitalization of the transcribed text,

- machine translation into a target language,

- text-to-speech synthesis (TTS) and

- voice conversion for voice-over generation.

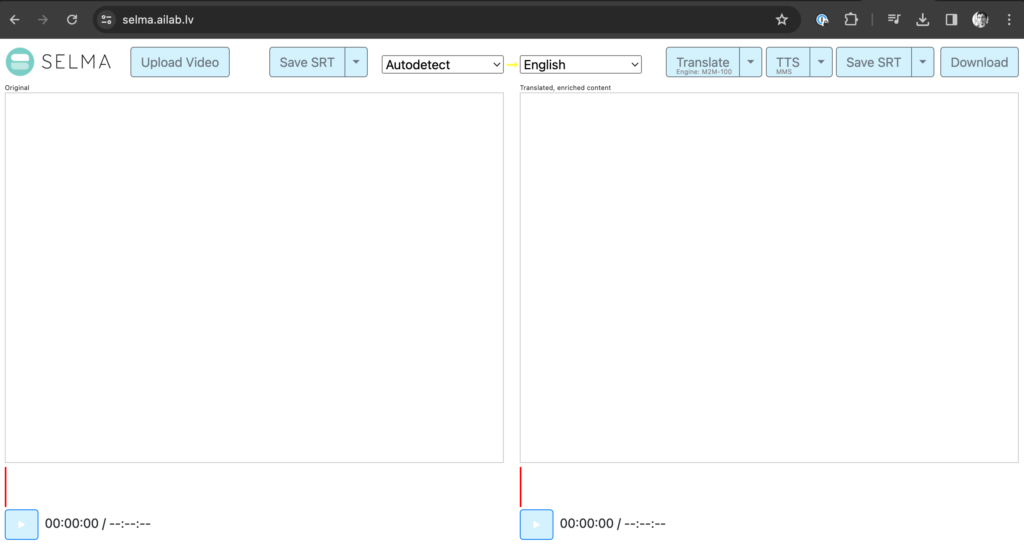

The platform has evolved through several generations, and its most current version is available at https://selma.ailab.lv.

For whom is it for?

In fact, the SELMA OSS platform can be used not only by developers in order to combine and test alternative language models before they are integrated into the end-user applications – it can also be used as an entry-level application by journalists and media producers themselves to transcribe their recordings, generate subtitles and voice-over, or to generate a podcast from an input text.

Dive Deeper: How it works

Speech Recognition and Transcription

Typically, the workflow starts with uploading a video file. When the file is uploaded, a default speech recognition model is applied instantly. First, it automatically detects the language which can be overridden by the user. Shortly after, an automatic transcription is produced and presented in a text box on the left. The user can skim through the transcription and correct ASR errors. The text can also be re-segmented into a different paragraph split to fit the limited subtitle space.

Machine Translation

Once the transcript is correct, the user can proceed with machine translation (MT) into one of the 30+ supported languages. The translated text, presented in a text box on the right, can be post-edited to resolve MT errors. Note that the segmentation and timestamps of the translated text correspond to the segmentation and timestamps of the original text and should not be altered, which is crucial for the correct subtitle generation. Also note that if there are subtitles (SRT) available for the video beforehand they can be uploaded in the left or right text-box thus avoiding the ASR or MT steps and the respective post-editing efforts.

Voice-Over

Once the translation (subtitles) is done, the user can generate a text-to-speech voice-over from this translation. The voice-over is automatically aligned with the subtitles.

The user can also change the voice of the artificial speaker to the voice of another speaker. For instance, the synthesized voice in the target language can be made similar to the original voice of the source language. To make this happen, a short recording of the desired voice must be uploaded.

Podcast Generation

Instead of creating subtitles and voice-over for a video, the workflow for podcast generation starts with plain text either in the source language (copy-pasted in the text box on the left) or directly in the target language (copy-pasted in the text box on the right).

The result – the generated audio file (WAV) as well as the subtitle files (SRT) in the source and target languages – can be downloaded.

Overview

A short overview of the functions is summarized in this short run-through:

Very important: Your data stays safe

Finally, it should be noted that the public demonstrator of the SELMA OSS platform does not require registration and authentication nor does it store any content, original or generated, after the session is closed by the user.